EdTech leaders face rising pressure to deliver AI-driven learning while hiring slows and talent stays scarce. Many teams struggle to add AI-native developers fast enough without increasing long-term risk or losing control. This challenge is most visible for leaders responsible for delivery, compliance, and platform stability.

How can teams adopt AI when the right skills are hard to hire and expensive to retain? Through IT staff augmentation in education, organizations can add AI-native capability while keeping ownership inside the team. The sections below explain why this model works, where it fails, and how leaders can use it to strengthen delivery decisions.

Key Takeaways

- Hiring AI-native developers through IT staff augmentation in education helps EdTech teams add critical skills without long hiring cycles.

- This model supports AI adoption while keeping product, data, and delivery control inside the organization.

- AI-native talent creates value only when guided by clear learning and product goals.

- Cost outcomes depend more on scope clarity than on developer rates.

- Augmentation works best as a planned operating choice, not a reactive fix.

What It Means to Hire AI-Native Developers

AI-native developers are not general engineers who later add AI skills. They build systems where data, models, and automation are part of the foundation. In EdTech, this often includes adaptive learning logic, recommendation systems, and learning analytics.

Hiring this talent through staff augmentation allows teams to embed AI capability into existing workflows. External developers work inside internal teams. Product and learning decisions remain internal.

This model works only when leaders are clear on how AI supports learning goals. AI-native skills strengthen focus when direction is clear and expose gaps when it is not.

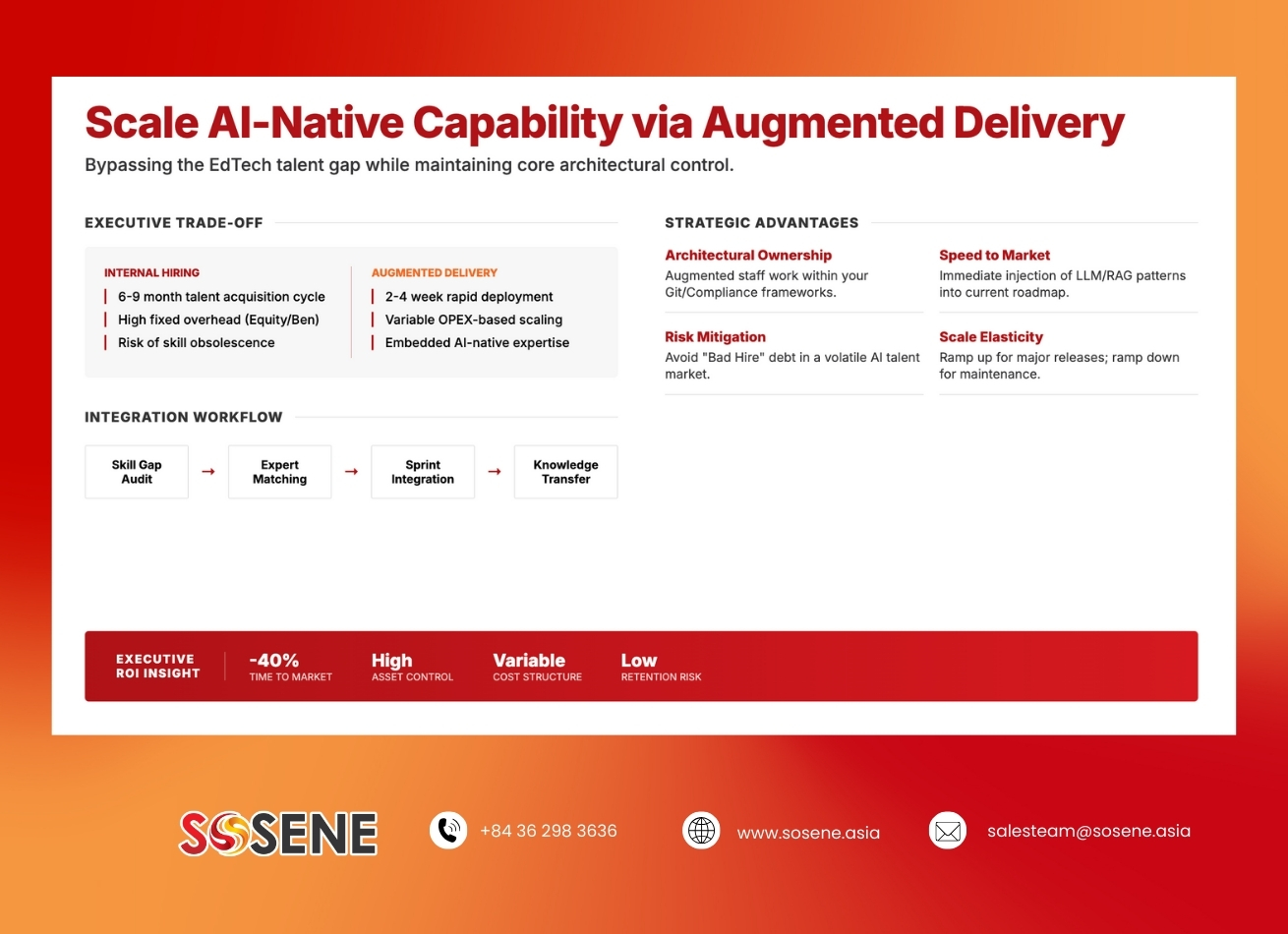

Why Staff Augmentation Fits AI Work in EdTech

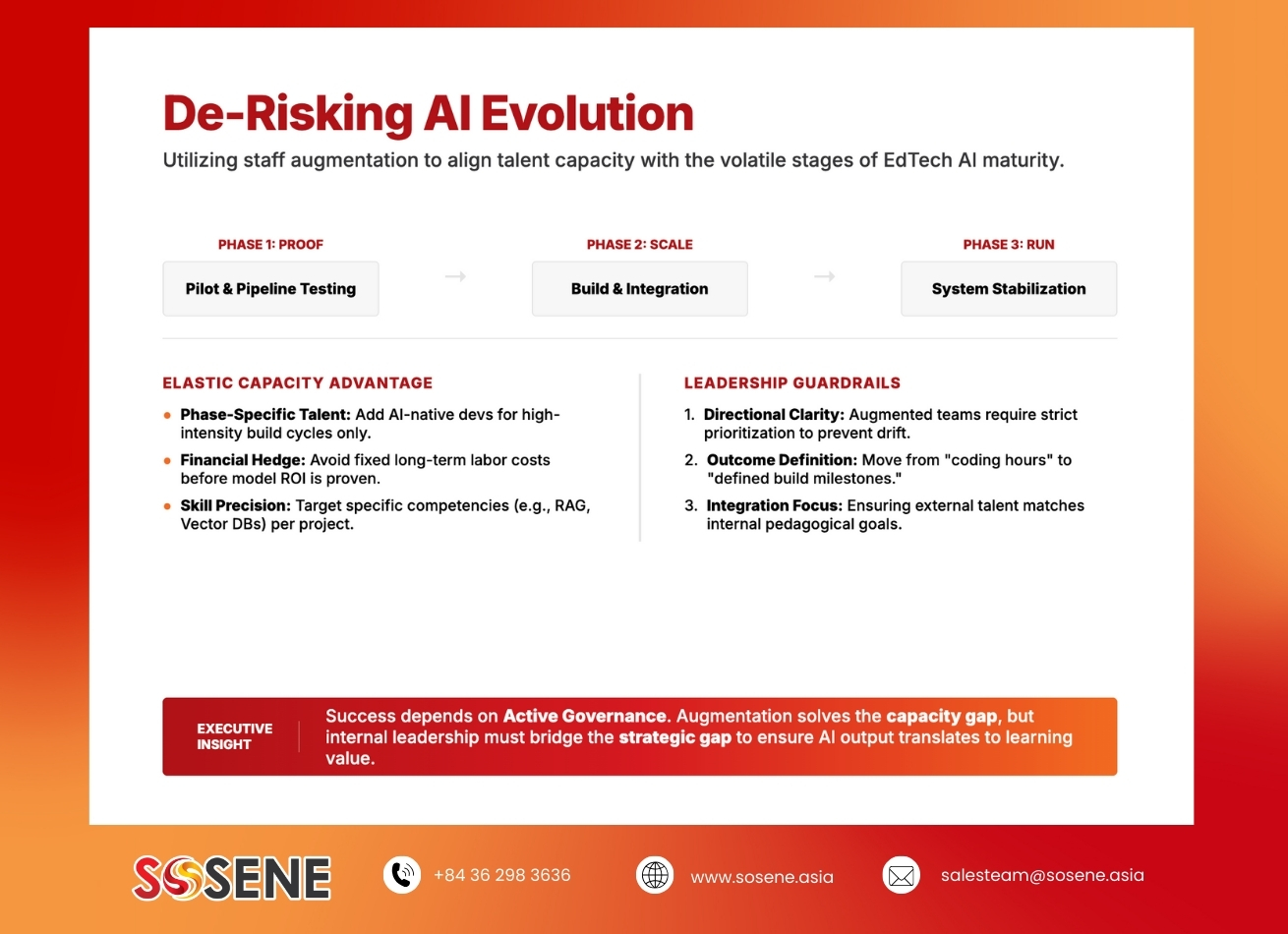

AI initiatives in education often start as focused efforts. Teams test models, data pipelines, or personalization features. Hiring full-time roles before value is proven increases risk.

IT staff augmentation in education allows leaders to add AI-native developers for defined phases. Capacity grows during build and learning cycles and shrinks once systems stabilize. This reflects how AI work evolves in practice.

The trade-off is leadership effort. Augmented AI teams need direction, priorities, and clear limits. Without these, even strong talent underperforms.

Control, Data, and Responsibility

AI work in education carries risk. Data privacy, bias, and transparency matter. Many leaders avoid outsourcing AI work because it can weaken control over these areas.

Staff augmentation keeps AI-native developers inside the organization’s governance structure. Internal leaders control data access, model use, and decision logic. This preserves accountability while increasing delivery capacity.

Control also brings responsibility. Leaders must stay involved in AI decisions and outcomes.

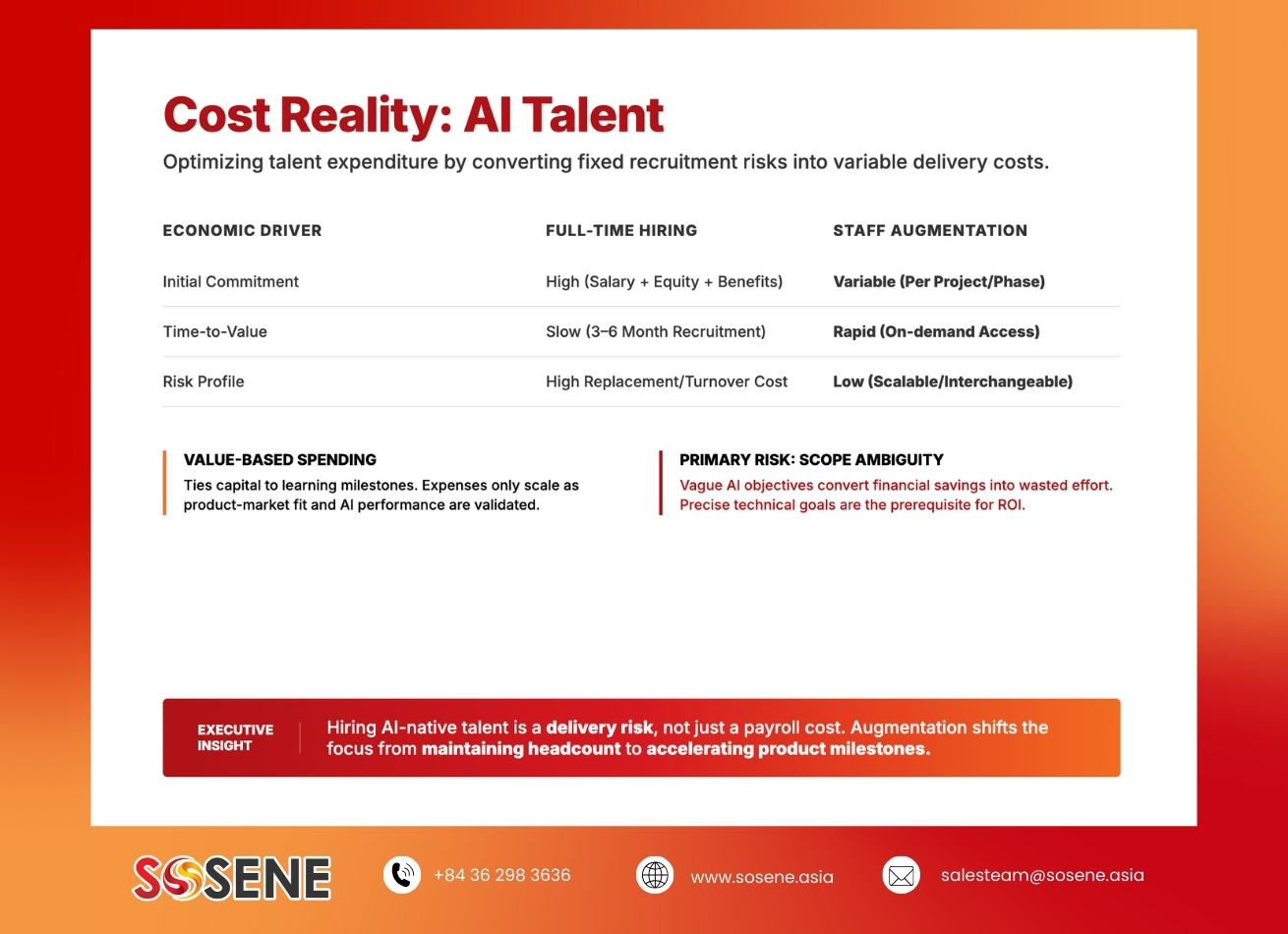

Cost Reality of Hiring AI-Native Talent

AI-native developers are costly to hire full time. Beyond salary, hiring includes long recruitment cycles and high replacement risk. For many EdTech teams, this creates pressure before value is clear.

Staff augmentation ties AI cost to delivery. Spending follows learning milestones and product progress. Leaders gain clearer cost boundaries.

The largest risk is unclear scope. Vague AI goals often lead to wasted effort and delay.

Impact on Learning Products

When used well, AI-native developers expand what EdTech teams can build. Learning paths become adaptive. Feedback becomes timely. Analytics become useful for decisions.

Augmented AI talent can also reduce load on internal teams. External developers focus on model development and integration. Internal teams focus on pedagogy and outcomes.

AI supports learning only when guided by intent. Augmentation does not replace educational leadership.

Execution Discipline Makes the Difference

Hiring AI-native developers through IT staff augmentation in education requires discipline. Roles must be clear from the start. Decision rights must be defined. Communication must stay simple and regular.

Onboarding matters even for senior AI talent. Education platforms have rules, constraints, and context. Cultural fit matters as well. AI-native developers must work with educators and product leaders, not apart from them. Teams that treat augmentation as a partnership tend to see stronger results.

EdTech leaders often explore hiring AI-native developers when internal teams reach their limits. The real question is not access to AI skills, but how those skills fit the organization.

Sosene works with EdTech teams to embed AI-native developers through staff augmentation while preserving ownership and governance. The focus remains on learning outcomes, data responsibility, and sustainable delivery. Leaders can request a strategic discussion to assess whether this model supports their AI roadmap.

Conclusion

Hiring AI-native developers is now a strategic decision for EdTech leaders. It shapes how teams learn, adapt, and deliver over time.

IT staff augmentation in education offers a way to add AI capability without taking on the long-term risk of premature full-time hiring. When guided by clear goals and strong leadership, it balances speed with control.

For teams building serious learning products, augmentation works best when treated as part of the operating model rather than a temporary experiment.

FAQs

When should EdTech teams hire AI-native developers through augmentation?

This approach fits when AI work is important but still evolving. It allows teams to move forward without committing to permanent roles too early. Strong product leadership improves results.

What risks come with augmented AI teams?

Misalignment is the main risk. Without clear goals, AI work can drift. Data access, privacy, and compliance also require close control.

How does this compare to hiring full time?

Full-time hiring brings long-term cost and replacement risk. Augmentation allows learning and delivery before commitment. Scope clarity matters more than cost comparison.

Why not outsource AI development?

Outsourcing reduces control over data and decisions. Staff augmentation keeps AI work inside internal governance structures.

How should leaders evaluate AI augmentation partners?

Leaders should look for education experience and delivery discipline. Communication and governance quality matter more than technical claims.